In this post, I will start by presenting some famous optimization algorithms. In a second step, I will give some interesting connections between them, allowing the reader to get a better understanding of the field.

I. Greedy methods

II. Primal methods

- Gradient descent

In this section, we consider a function ![]() , that is differentiable and convex, and we tackle the problem:

, that is differentiable and convex, and we tackle the problem:

![]()

provided with an initial point

![]()

of

![]()

where

| Drawbacks | Advantages |

| Often slow | Each iteration has small cost |

| It is a first order method |

- To address the first issue, methods to improve convergence are:

quasi-Newton methods – conjugate gradient method – accelerated gradient method. - To address the second issue, methods for nondifferentiable or constrained problems are:

subgradient method – proximal gradient method – smoothing methods – cutting-plane methods.

2. Quasi-Newton

The Newton method comes from minimizing a second-order approximation of ![]() around

around

![]()

leading to the update rule

![]()

If the Newton method demonstrates fast convergence, it has the disadvantage to be expensive for large scale applications. To overcome that, the Hessian can be approximated by a metric

Hence, the Quasi-Newton methods differ by considering different way to compute the sequence of matrix

3. Subgradient method

From now on, we no longer require ![]() to be differentiable (but

to be differentiable (but ![]() is still convex). Subgradient method is certainly the simplest method for minimizing

is still convex). Subgradient method is certainly the simplest method for minimizing ![]() . It is similar to gradient descent but replacing gradients by subgradients.

. It is similar to gradient descent but replacing gradients by subgradients.

![]()

where

Remark : Contrarily to a negative gradient ![]() , a negative subgradient

, a negative subgradient ![]() is not a direction of descent in general. This means that the subgradient method is not a descent method (

is not a direction of descent in general. This means that the subgradient method is not a descent method (![]() can occur).

can occur).

4. Proximal gradient method

Optimization problems in machine learning are often of the form:

![]()

where

algorithms (compared to subgradient methods) even though the global function

Definition (Proximal operator). Let ![]() be a proper, lower semi-continuous convex function. The proximal operator of

be a proper, lower semi-continuous convex function. The proximal operator of ![]() is defined by:

is defined by:

![]()

(By strong convexity, the arg min exists and is a singleton, so proxg is well defined.)

The following algorithm is workable in the setting described previously, that is when:

is convex and differentiable;

is convex and differentiable;![Rendered by QuickLaTeX.com g : \mathbb R^d \to (-\infty,+\infty]](https://quentin-duchemin.alwaysdata.net/wiki/wp-content/ql-cache/quicklatex.com-8e4582a434c205b8a5958c3347a08380_l3.png) is convex with an easy-to-compute proximal operator.

is convex with an easy-to-compute proximal operator.

![]()

where

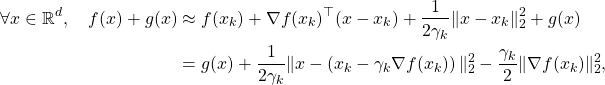

The interpretation of the proximal gradient method is very similar to the one of the gradient descent. It consists in minimizing a local quadratic approximation of ![]() plus the original non-differentiable function

plus the original non-differentiable function ![]() :

:

where

5. Douglas-Rachford method

Here, we focus on the optimization problem:

![]()

where

![]()

where

| We can derive three special cases of the proximal gradient method: – when – when – when |

III. Primal-Dual methods

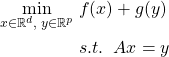

Let us consider the optimization problem:

minimize

![]()

where

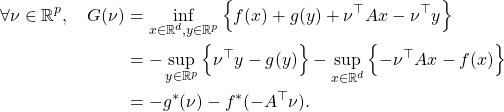

The dual function to this last problem is:

Therefore, the dual problem of interest is:

(1) ![]()

Theorem

Let ![]() ,

, ![]() be proper convex functions and

be proper convex functions and ![]() . Assume that either

. Assume that either ![]() or

or ![]() and that

and that ![]() .

.

If the optima are attained in Problem \eqref{P10} and Problem \eqref{P11}, then strong duality holds:

![]()

Moreover, a primal-dual optimum is a solution to the saddle-point problem:

![]()

We have seen previously that the proximal gradient method, used for minimizing a composite objective function, reduces to:

- the gradient method when

- the proximal point method when

.

.

In this section, we exploit these two simple algorithms with the dual problem \eqref{P11} to devise primal-dual methods.